We love David Mindell’s Digital Apollo: Human and Machine in Spaceflight. Mindell’s book tells the story of “human pilots, of automated systems, and of the two working together to achieve the ultimate in flight.” From our perspective, the book explains the link between what rocket scientists in the 1950s called “systems engineering” and what the cool kids in skinny jeans now call “design thinking.”

Whether we’re designing the first spacecraft to land on the moon, or the first phototherapy device intended for use in a low-resource hospital, the key is to start with a very clear idea of success. In the case of a rocket, the system is only successful if it brings the astronauts all the way home. In the case of a medical device, the system only works if people are willing to use it and able to successfully treat patients with it. There is no partial credit!

Another similarity is the role that we as designers imagine for the users. In the Apollo program, the two primary metaphors for the astronauts were “cowboys or cargo.” Would astronauts serve as the pilots of “dumb rockets” or as passengers in an autonomous vehicle?

As pilots, the early astronauts expected to have lots of instrument data and full control of the vehicle. Rocket engineers, on the other hand, were more comfortable developing fully autonomous systems. They realized that rockets could develop problems faster than a human could respond.

Initially, [software engineer Alex] Kosmala pictured the spacecraft with one button: "The astronaut goes in, turns the computer on and says ‘Go to moon’ and then sits back and watches while we did everything." Another version has the computer running two programs—"P00" to go to the moon, and "P01" to return home. [David Mindell, Digital Apollo, p. 161]

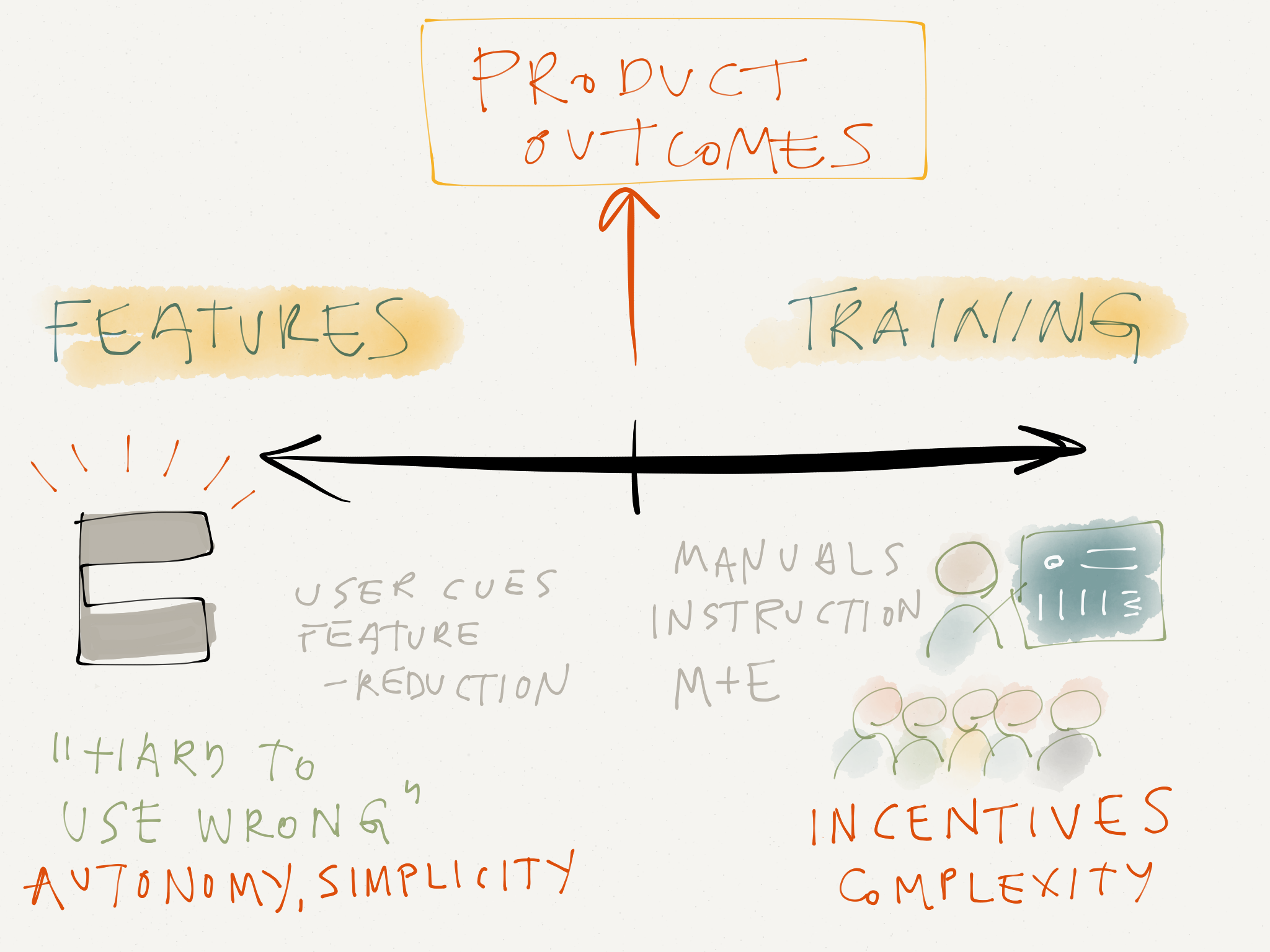

MIT Instrumentation Laboratory cartoon showing the extremes of automation. Too much automation leaves the astronauts bored, awaiting an abort, while too little overwhelms them with work. (Draper Laboratories/MIT Museum)

The Apollo system was ultimately a synthesis that combined autonomous systems with human inputs. It serves as an excellent metaphor for healthcare. Do we imagine caregivers in low-resource hospitals as “cowboys” who need lots of options and the ability to override medical device settings, or do we imagine them as “cargo,” overloaded with too many patients and not enough training and grateful for machines that can reduce their workload?

As design thinkers, we see two options for guaranteeing our desired social impact outcomes with a given medical device: provide lots of training and incentives for appropriate behavior, or create product features that make a device intuitive and easy to use.

DtM’s overall philosophy of making medical devices “hard to use wrong” is really a statement about our expectations for users. In the same way Apollo rocket scientists realized that too little automation would place unrealistic demands on the astronauts, we see how medical systems that expect significant amounts of prior training and user expertise are poorly suited to the needs of rural hospitals in developing countries.

Mindell’s book includes loads of other ideas that have applications in social impact design. One is Apollo’s approach of “all-up testing”: investing only in tests of complete systems, rather than testing subsystems individually when they might not work as well together. Another is what the Apollo team called “configuration discipline”: the rigorous documentation of system changes.

Fantastic book, check it out! And if you buy the book through the links in this email, Amazon will send part of the proceeds to DtM! [Digital Apollo]